"Waterfall" doesn't mean what you think it means

here's why I think it's far superior to anything that we’re doing today

Editor’s Note: After publication, Kris joined us on Changelog & Friends to discuss this article, his dislike of the “tech debt” analogy, why documentation matters so much & how everything is a distributed system. Early reviews of that episode have included the words “great” and “awesome”…

On Go Time #281, my Unpopular Opinion was about the software development method outlined in the waterfall paper. There was a brief exchange between myself and Johnny:

Kris: So I read the “Waterfall” paper, and I will say that the method of doing engineering described in that paper is far superior to anything that we’re doing today.

Johnny: I heard Waterfall is superior to all.

Kris: That might be what you heard. Is that what I said? That’s what you heard though…

The Unpopular Opinion segment of the show is a lot like No Nuance November, in that the opinions that are offered generally have deep truths to them, but we strip away that nuance to provide a quick soundbite that we can have our audience vote on. As expected, this opinion was unpopular, but this is a case where adding the nuance is quite important.

This post expands on my Unpopular Opinion, providing the background for how I arrived at it, adds some suggestions for improving your software development process, and includes a bonus Unpopular Opinion at the end.

Background

The first step of adding nuance is to define the terms that we use. Let’s start with “waterfall”. To help put together a slightly broader definition of waterfall, I asked the other hosts of Go Time how they define the waterfall development model. Here’s what they said.

Angelica: A process where all planning work is done before any code is written and where adaptation to unexpected circumstances or changes in requirements are difficult to incorporate.

Mat: Waterfall is a development process with big upfront design where all design is completed before moving to subsequent steps, in which some people are excluded, and there is no iteration

Jerod: Big up front design and only move forward

If we aggregate these definitions, we can break the waterfall model down into three components:

- There are explicitly delineated stages in the process. The first is a large design stage.

- Each step has a single subsequent step, i.e. no step has two options for the following step.

- Once a step is completed, we do not return to it at any point in the future.

How does this understanding match with the sources of the definition?

If we use Wikipedia as the general consensus for the history, not well at all. There are three papers that Wikipedia cites as establishing the phases used in waterfall along with the actual term itself:

- Production of Large Computer Programs by Herbert D. Benington

- Software Requirements: Are They Really A Problem? T.E. Bell and T.A. Thayer

- Managing The Development Of Large Software Systems by Winston W. Royce

I think these three papers are actually sufficient to explain not only our misunderstanding of waterfall, but also where it came from. Additionally, I said that the methodology in Royce’s paper specifically is superior to what we do today, but I’d like to expand it to encompass all three of these papers. The papers are similar in what they suggest, and combined provide useful additions to software engineering processes.

Production of Large Computer Programs

Benington’s paper was published in 1956 and then republished with an editor’s note in 1983. This paper is cited as the first one describing software development phases that resemble waterfall.

However, this paper doesn’t describe the entire development process, only a portion of it [1]. To this point, Benington’s editor’s note clarifies that in the original paper they “[omitted] a number of important approaches”. One of the significant omissions is that they built a prototype with the main purpose of understanding as much of the problem space as they could before they built the system. If I were to speculate, I would say that people believing this paper describes waterfall were assuming that it described the entire development process. This is a good example of why we shouldn’t make assumptions based on the absence of information [2].

The editor’s note of the paper helps us understand where our current software development practices have gone awry. Benington felt that the main reason their software project was successful was that those building it were engineers trained in formal engineering [3] who used structured programming and a top-down approach [4].

He cites three reasons why subsequent projects missed their schedules and had large cost overruns:

- Programmers actively distanced themselves from formal engineering.

- Organizations attempted to manufacture software, instead of design it.

- No matter how much you plan, unexpected problems will arise while building.

The second reason is the most notable. In Benington’s assessment, the problem with software development was not that it was top-down, but that it lacked prototyping. The issue with the specifications was that all of them had to be written before any code was. He thought this method was “terribly misleading and dangerous”.

The major theme in both the paper itself and the editor’s note is that software development requires far more documentation than was being produced by the processes in use. This was true for the process they used to produce SAGE in the 1950s and was still true when Benington wrote the editor’s note in the 1980s. It is still true to this day.

Software Requirements: Are They Really A Problem?

Bell & Thayer’s paper was published in 1976. As the title suggests, this paper was a research effort to determine if software requirements were an issue for software development. This paper originates the term “waterfall”:

[An] excellent paper by Royce [introduces] the concept of the “waterfall” of development activities.

This brief reference is likely what started the confusion around Royce’s paper. Context is important here: Bell & Thayer were primarily concerned with requirements analysis and documentation. Their usage of material from Royce’s paper is narrowly focused on requirements related topics. Their paper doesn’t comment on the entire software development process. That said, their paper is still relevant to modern software development processes outside of it establishing the term “waterfall”.

The conclusion they reached is that software requirements were insufficient, which causes the software developed to not solve the desired problems. Their solution: software requirements must be engineered and continually reviewed / revised. So this paper also states that software development processes require additional documentation and that an iterative approach is required.

Managing The Development of Large Software Systems

Royce’s paper was published in 1970. I won’t bury the lede here: the conclusion of this paper is the same as the other two. Write more documentation.

In the view of Royce, whose view aligns with the authors of the other two papers, even if quite a bit of documentation exists, it is likely not enough and you should write more.

As his is the only paper that actually discusses a development process in depth, I think it’s important to cover a bit more of what he says. But first, let’s talk about an important concept.

An Interlude On Risk

While researching this post, I noticed that many readers fixate on Royce’s claim that the process he describes is risky and failure-prone. I believe this is a misunderstanding of the way Royce sees risk. There’s a fantastic book by Tom DeMarco and Timothy Lister titled Waltzing with Bears, in which they discuss managing software projects. There’s one particular quote I’d like to point out from the first chapter:

If a project has no risks, don’t do it. Risks and benefits always go hand in hand.

Risk is unavoidable, but that doesn’t mean we have to blindly accept its chaotic outcomes [5].

This is the sense of risk used in Royce’s paper. So when he writes:

“I believe in this concept, but the implementation described above is risky and invites failure.” (emphasis mine)

He isn’t saying that the process is bad, he’s saying the inherent risk hasn’t been contained and mitigated. This is clarified two paragraphs later when he writes:

“I believe the illustrated approach to be fundamentally sound. The remainder of this discussion presents five additional features that must be added to this basic approach to eliminate most of the development risks.” (emphasis mine)

To emphasize this point, earlier in the paper he declares that the two-step process he initially outlined is dangerous for large software projects:

“An implementation plan to manufacture larger software systems, and keyed only to these steps, however, is doomed to failure.”

Royce’s Software Development Process

So what is it about Royce’s software development process that I find to be far superior to anything we’re doing today? Let’s start with one of his first pieces of advice: if the basic process works for you, then use the basic process.

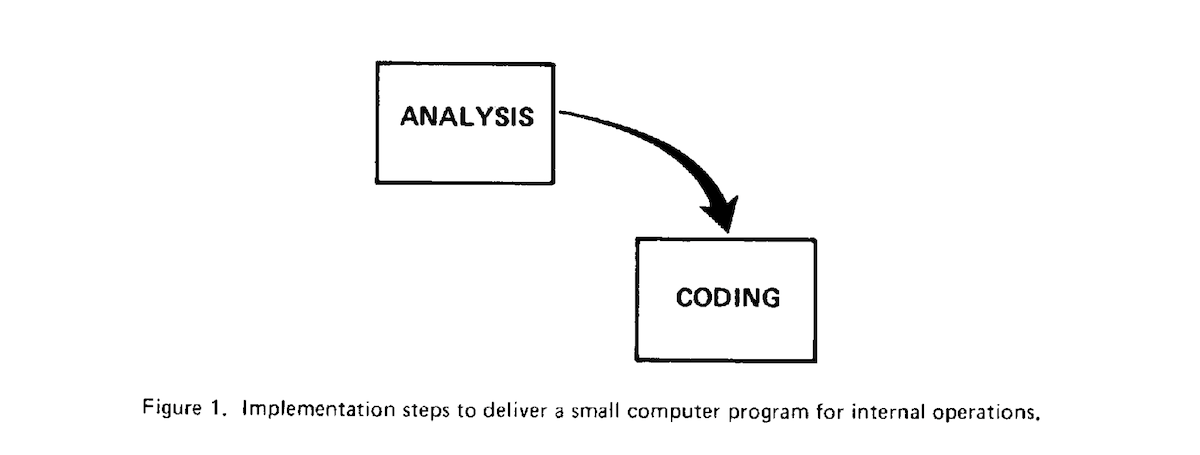

He states that there are two crucial stages to software development: analysis and coding. Translated into modern language, these roughly equate to the work done by product managers and the work done by software engineers, respectively.

These make up what I’ll call the basic process for software development.

Royce emphasizes that these are stages that businesses are happy to pay for and people are happy to do. But in his view, an entire development process made of only these two stages only works for small software projects. In particular, projects that will be operated by the software engineers who built them. Larger projects or those which will be operated by another party (such as an operations team) are “doomed to failure” if they only use these two steps.

His view here is pragmatic: he believes that if these stages work for you then you’d be wasting money doing additional stages. However, in his experience, writing large software systems always requires additional stages.

Royce suggests adding 5 stages to the process:

- system requirements

- software requirements

- program design

- testing

- operations

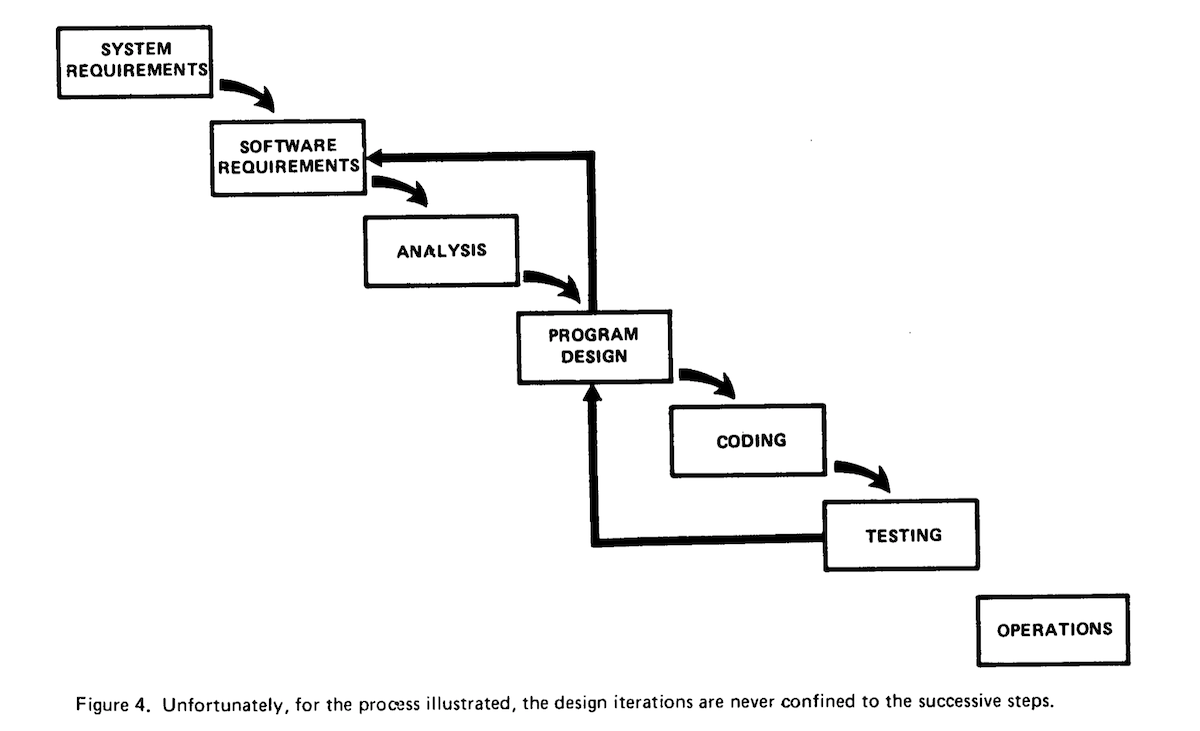

He notes that these are steps that businesses don’t want to pay for and people don’t want to do, but are nonetheless required. So the total process is 7 steps: system requirements, software requirements, analysis, program design, coding, testing, and operations. He notes that there is an iterative relationship between these steps.

Yes, you read that right: the “waterfall” paper describes an iterative development process, and Royce says this on the very first page of the paper. That means all three papers that are used to describe the history of waterfall software development advocate for iterative software development. He notes that the iterative relationship is between the preceding and succeeding steps, that it maximizes the amount of salvageable work from earlier stages, and that it keeps the change process “scoped down to manageable limits.”
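As an illustration only (this is not code or notation from Royce’s paper), the adjacent-step iteration can be sketched in a few lines of Python. The stage names follow the seven steps listed above; `STAGES` and `allowed_moves` are hypothetical names chosen for this sketch.

```python
# Illustrative sketch only: the stage names follow the seven steps listed in
# this article; STAGES and allowed_moves are hypothetical names.
STAGES = [
    "system requirements",
    "software requirements",
    "analysis",
    "program design",
    "coding",
    "testing",
    "operations",
]


def allowed_moves(stage: str) -> list[str]:
    """Return the stages reachable from `stage`: only its direct neighbours."""
    i = STAGES.index(stage)
    moves = []
    if i > 0:
        moves.append(STAGES[i - 1])  # iterate back to the preceding step
    if i < len(STAGES) - 1:
        moves.append(STAGES[i + 1])  # proceed to the succeeding step
    return moves


print(allowed_moves("program design"))
```

In this model a problem found during program design can send you back to analysis, but not all the way to the requirements in a single jump, which is what keeps each change small.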

He then discusses the problem with this process: testing occurs too late. This is often the main critique of waterfall development and I’ll illustrate with an example:

If your business requirements state that you need to handle 100,000 concurrent users, and you’ve done the analysis, design, and coding to implement a system you believe will handle 100,000 concurrent users… Until you actually do the testing you have no idea if the system will actually be capable of satisfying this requirement.

Royce provides a figure representing this:

The main thing to note is that the backward iterative cycles skip the coding and analysis stages. There’s a bit of nuance as to why he does this. Royce believes that analysis (product management) and coding (software engineering) departments are well staffed and that generally they have little impact on requirements, design, and testing. To continue with the previous example, if the system we tested cannot handle 100,000 concurrent users, it is much more likely that the issue lies within the design or business requirements than within the analysis or code. The well-staffed aspect also means that if there is a problem with the analysis or code, the changes will be easy enough to make that they will have little impact on the overall process. In Royce’s view, if a problem is discovered during testing…

“Either the requirements must be modified, or a substantial change in the design is required. In effect the development process has returned to the origin and one can expect up to a 100-percent overrun in schedule and/or costs.”

This is where that risk tradeoff comes from. The high risk is that if you don’t get everything right, then you wind up with a large overrun in time and money, but the reward is that if you do get everything right, you’ll have built software with minimal time and minimal cost. Instead of crossing your fingers and hoping that you get everything right, Royce suggests making five additions to the process.

Together, the additions constrain the potential overrun in time and money while increasing the time and costs by a minimal amount.

Royce’s 5 Risk Management Additions

The language that Royce uses is rather direct and requires a bit of nuance. To put this nuance at the forefront, each of his additions is titled below with my interpretation followed by Royce’s original title (in parentheses).

1. Do a design first (“Program Design Comes First”)

Royce suggests adding a stage between the Software Requirements and Analysis stages. He calls this the Preliminary Design and its purpose is to gather information, not to be a correct nor final design. This design guides analysis and prevents analysis from continuing when there are issues with requirements.

Returning to our 100,000 concurrent users example, we could write a preliminary design where we choose the basic technologies that we want to use [6] along with assessments of what we can build, test, and operate [7]. If it turns out that it’s not feasible to build the system to handle 100,000 concurrent users with current resources or knowledge, then it’s much better to return to the business requirements step than to discover this after product management has already created a set of PRDs.

Royce notes that this must be a written document, since “at least one person must have a deep understanding of the system which comes partially from having had to write an overview document”. This echoes a similar recommendation from Benington, who sees having a chief engineer as a major component for the success of a project.

2. Write docs. No, that’s not enough. Write more (“Document The Design”)

As already mentioned, Royce feels strongly about documentation. He says that if during a project review he discovers documentation is in serious default he would:

“Replace project management. Stop all activities not related to documentation. Bring the documentation up to acceptable standards”.

He gives three reasons why so much documentation is required:

- Written communication is tangible, verbal communication is not. By writing extensive documentation we can refer to a concrete thing and can avoid people continually hiding behind the “I’m 90% done” excuse.

- During the early stages, the documentation is the design and the specification. If there’s no documentation then you haven’t actually designed or specified anything. He quips, “if the documentation does not yet exist there is as yet no design, only people thinking and talking about the design which is of some value, but not much”.

- Documentation enables those who specialize in testing, operation, and maintenance to do their work. If there is a lack of documentation, those parties must consult with the designers and programmers. This leads to analysis of issues being done by the same person who likely created the issue in the first place.

Royce suggests six documents that should be written throughout the software development process, five of which should be completely up to date when the software project is delivered. (Bell & Thayer suggest having up to date documentation as well.)

3. Prototype the hard parts (“Do It Twice”)

Royce’s original title is quite misleading. He’s actually arguing for doing prototypes, but only for areas with a high amount of uncertainty.

How do we know if we’re unsure? Well, many of us have been in the room when a project manager asks for an estimate and one person says “three hours” and another person says “two weeks” for the same task. The solution to this problem is to prototype and gain enough information such that you no longer need to rely solely on human judgement.

This addition pairs well with the first two, as documentation from previous projects can aid us in estimating what a similar component of a new project will require. By doing a preliminary design we can figure out what things we need to prototype [8]. Royce specifically says to not prototype the things that you already know how to build or the things that are straightforward.
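One way to turn “how do we know if we’re unsure?” into something checkable is to look at how far apart the estimates for a task are. The sketch below is only an illustration of that idea: the task names, the estimates, and the 4x threshold are made-up assumptions, and `needs_prototype` is a hypothetical helper, not something Royce prescribes.

```python
# Illustrative sketch only: the task names, estimates, and the 4x threshold
# are made-up assumptions, not values from Royce's paper.
def needs_prototype(estimates_hours: list[float], max_ratio: float = 4.0) -> bool:
    """Flag a task when the largest estimate dwarfs the smallest one."""
    low, high = min(estimates_hours), max(estimates_hours)
    if low <= 0:
        raise ValueError("estimates must be positive")
    return high / low > max_ratio


estimates = {
    "login form": [3, 4, 5],   # the team broadly agrees
    "realtime sync": [3, 80],  # "three hours" vs "two weeks" (2 x 40 hours)
}

# Keep only the tasks whose estimates disagree enough to justify a prototype.
to_prototype = [task for task, hours in estimates.items() if needs_prototype(hours)]
print(to_prototype)
```

Tasks where the team already agrees are left alone, which matches the advice not to prototype what you already know how to build.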

4. Plan your testing and do more testing (“Plan, Control, and Monitor Testing”)

While the previous three steps are meant to reduce the surprises found during testing, that doesn’t obviate the need to do testing. Some of Royce’s advice is quite antiquated [9], but it boils down to four things: have testing specialists handle testing, since they’ll be cheaper and do a better job; do code reviews; thoroughly test your code [10]; and do a final set of tests with sign-offs.

5. Communicate with the business [11] (“Involve The Customer”)

Royce notes that while the business should be included, they shouldn’t have free rein. After the requirements stages, he suggests having three designated places where the business is solicited for input: after the preliminary design, during program design, and after testing in the form of a final software acceptance test.

Review

And that’s it. In Royce’s view, adding these steps to the software development process he outlined will “transform a risky development process into one that will provide the desired product”. I agree with Royce that these stages and additions are required for large software projects.

That said, I don’t think that we should just directly take a development process that one person found useful and use it everywhere. We should extract the valuable advice and use it to build new processes, ones that give us pathways to discovering more ways to manage high risk/high reward projects. I believe this paper contains three such valuable pieces of advice that will improve most (if not all) software development processes:

- Acquire as much information as you can as early in the process as possible.

- Document everything. This includes the design, specifications, communication, project planning, and anything else that might be useful in improving the development process in the future. Documents that are formal parts of the process, such as requirements or design documents, should be iterated on, and up-to-date versions should be delivered when the project completes.

- Assign separate staff for separate stages. At a minimum, the person who wrote the code shouldn’t test it, nor should they operate it, but ideally a separate staff of specialized people test and operate the software.

I think the second piece of advice is the most valuable of the three.

Many of us have sat in a retrospective meeting [12] after a particularly long project and found it difficult to remember all of the things that we wanted to say. There might be something that really frustrated us that we no longer remember, or something that was fantastic that we quickly forgot. By documenting these things we not only aid ourselves in remembering for the meeting itself, but we also provide a way for people in the future (including ourselves) to go back and review a previous project. This is what helps build organizational momentum and institutional knowledge, helping future projects require less time and fewer resources.

The third piece of advice might seem obvious, but I’ve been a member of several organizations where engineers are allowed to review their own code. If you have the same group of people doing a separate stage, you don’t have a separate stage, you’ve just drawn an extra box that isn’t meaningful. If the people writing the code also perform the testing, you don’t have a separate testing stage. The same is true if you have programmers operating the software.

Modern Day Interpretation

While I don’t think that we should directly use Royce’s software development process, I do think that it is a good place to start and superior to what most of us are doing today. Below are the steps of his process but updated for a modern understanding:

- Requirements (Business Requirements Documents)

- Rough Constraining Design & Prototyping

- Product Analysis (Product Requirements Documents) [13]

- Software Design (Software Design Documentation, including testing and operation)

- Software Programming

- Software Testing

- Software Delivery or Software Operation (depends on product type)

- Software Maintenance

Conclusion

If there is only one thing that you take away from this article, I hope that it is this:

Documentation is the key to a successful software project

Not only do the referenced authors heavily emphasize this point, but I believe the massive success of open source provides us with evidence of what happens when we focus on documentation. The open source ecosystem lives and dies by documentation. Projects that spend time to develop high quality documentation are often far more successful than competing projects that might be higher quality in terms of functionality or code.

An example of this is the PHP programming language and the ecosystem around it. I started my career in PHP and Drupal, and the main thing that pulled me in was the comprehensive documentation. Many of us joke about how so much of our job is “doing a web search, finding a Stack Overflow post, and doing what’s suggested”, and this is yet another example of how impactful an abundance of documentation can be.

In this article we defined the waterfall development methodology, discussed its origins including the “waterfall” paper, did a review of the paper itself along with associated papers, and suggested some ways to enhance your current software development process. I hope that over the course of this post, I’ve convinced you that the “waterfall” paper includes some great advice that can be used to improve our software development processes.

Thanks for taking the time to read this post and let me know your thoughts in the comments below.

Unpopular Opinion Redux

You didn’t think I would leave you without a new Unpopular Opinion, did you?

The way I defined waterfall at the beginning of this post specifically stated that once you progress from one stage, you never return to it. My unpopular opinion is that most software development actually follows this and that when we say that we’re iterating we are actually just spinning around in one specific stage.

Not only does most software development resemble Figure 1 in Royce’s paper (the basic two-stage process of analysis and coding), but even in the processes that have design stages in them, we rarely return to previous stages.

How many times have you actually gone back and updated the Business Requirements Document (if it even exists) or a Product Requirements Document? How many times have you gone back and updated a design document? If we were actually iterating to previous stages wouldn’t we also be revising and updating these documents? If they aren’t part of the process why did we write them in the first place?

1. The paper documents the process used to build the SAGE computer system and only lightly touches on the development process. While the authors did document parts of the process, their conclusion is that the advancements made within the industry mean the process used was inadequate for future program development.

2. Another interesting note in this paper is that development cost $55 per instruction and that if he were to build the system again, he would have planned to build a system with more than twice as many instructions, but would have directly translated the prototype, tested it thoroughly, and then iterated on the system. He believes this would have cut development costs in half.

3. "It is easy for me to single out one factor that I think led to our relative success: we were all engineers and had been trained to organize our efforts along engineering lines."

4. "In other words, as engineers, anything other than structured programming or a top-down approach would have been foreign to us."

5. Waltzing with Bears is a book about how to embrace risk and then how to contain and mitigate it. Doing so ensures that fewer surprises occur, improving the chance of success, and that we have enough structure in place to avoid the sunk cost fallacy.

6. A CDN provider, database management system, caching system, programming language, etc.

7. Even if your programmers can write the code to use a new database management system, can your testing team test it? Can your operations team reliably run it in production?

8. For example, your programmers might be able to design a data model and program against the newest database management system, but can your operations team actually run it? If they can, will it cost so much that it’s not worth it and you should use the database management system they’re already familiar with?

9. Especially his view that computer time is expensive. It was when he wrote the paper, but in the time since, human time has become much more expensive than computer time.

10. Including all the branches, which might be overkill, but projects like SQLite actually do this, so it’s possible.

11. For many (perhaps most) of us, we are building software for the business that we work for, so I think the adjustment to “business” is appropriate here.

12. A retrospective meeting is one where a team reviews a project or process that has completed. These meetings are meant to help understand what went well, what didn’t go well, what should be done differently, and what should keep being done.

13. Prototyping may run concurrently with Product Analysis.

Discussion

Jerod Santo

Bennington, Nebraska

Jerod hosts Changelog News, co-hosts The Changelog & takes out the trash (his old code) once in awhile.

2023-08-24T14:14:48Z

TIL there’s people out there that actually read papers?! 😜

Kris Brandow

New York, New York

2023-08-24T14:25:37Z

With a highlighter and pen too 🤓 need to make sure those papers look colorful when I’m done with them 😤