Auto-improved transcripts with GitHub Actions

or, how I taught Logbot to change Changelog's logs like magic

I love automation. It’s just magical when an application or a script *just does the thing* 100x faster than I could by hand and infinitely more accurately. Especially for mundane tasks. Magic.

I created a GitHub action that auto-formats Changelog’s episode transcripts. Here’s how. 👇

My backstory 📖

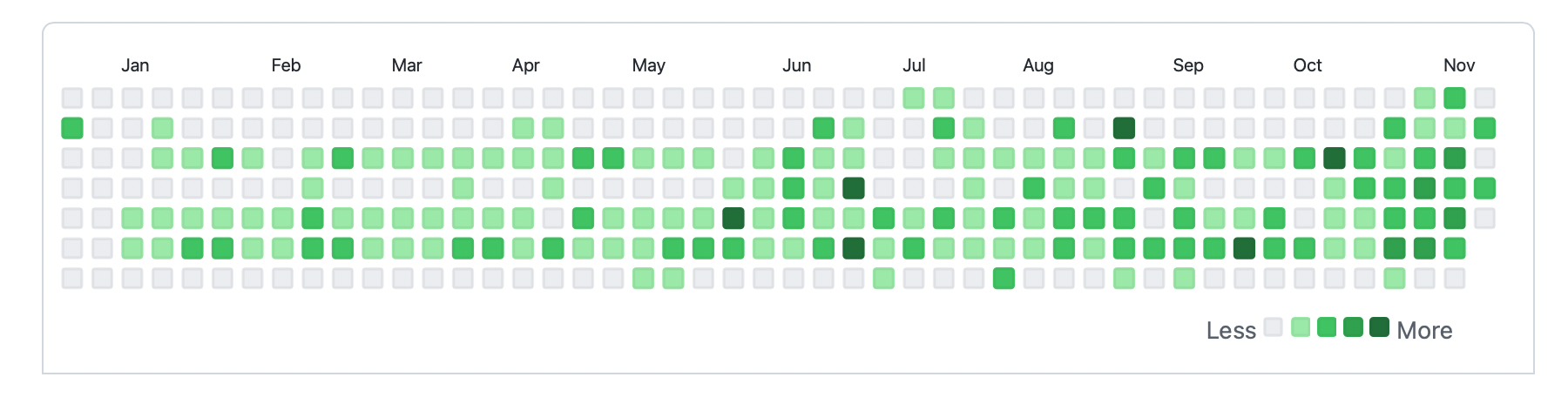

This was my first meaningful code contribution to open source!

It was an awesome experience for me. I’m a hobby coder, and podcasts like The Changelog played a big role in my journey learning to code. This made it even more exciting for me when I came across an issue requesting a GitHub action to auto-improve Changelog’s transcripts while scouring for potential contributions during Hacktoberfest. It was a special chance to contribute to something that I value.

I had played with GitHub Actions a little before, so I felt I knew just enough to give it a go…

The goal 🎯

The goal was a way to automatically apply certain style formatting rules to Changelog’s episode transcripts. There was an initial list of rules, which included fixing timestamps and changing javascript to JavaScript (because, as I learnt through this, that’s how you’re supposed to write it). Somebody will probably think of additional useful ones later, so it should also be simple enough to extend the solution.

The transcripts for Changelog are stored in markdown in this repo on GitHub.

The solution 💡

The main idea was to configure a GitHub Action that would run some code that would apply the formatting changes (per the issue description).

GHA? wha?!

GitHub Actions are a way to run code:

- in the cloud (i.e. on GitHub’s servers),

- when code is pushed to a repo (or on a schedule, or on various other triggers), and

- almost always for free (especially on public repos)

Actions are like hot dogs 🌭

Let me pause here to well actually myself: They’re actually called Workflows. Actions technically refer to single steps which build up to jobs which build up to workflows, but I’m just gonna refer to workflows as actions. Just like technically a hot dog is just the sausage and once it’s in a bun it’s a hot dog roll, but Imma just call the whole thing a hot dog. Actions are hot dogs.

🔙 to the solution

Anyway, back to the solution. It has three parts:

- a scaffold that finds and loops over all the transcript files

- a formatter that takes a markdown file and applies the required formatting to it

- a GitHub Action that runs the formatter on the repo whenever code is pushed

(and 2½: some tests for the formatter. This isn’t mission-critical code, so I didn’t aim for 100% test coverage. Still, testing the formatter was useful. More on this later.)

I also wanted to keep the solution lightweight and use technology that is ubiquitous. That makes it easy to maintain and enables as many people as possible to contribute.

This led me to write the whole thing in Node.js, due to its ubiquity and power. I also managed to write this without using any dependencies*. In the spirit of staying lightweight, it calls the command line for globbing lists of files and accessing git commands, rather than using an external dependency.
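To make the "call the command line instead of adding a dependency" idea concrete, here is a minimal sketch. It is not the code from the repo: the function names are made up for illustration, and it assumes the script runs inside a git checkout with git on the PATH.

```javascript
const { execFileSync } = require('node:child_process');

// Split NUL-separated command output into a clean list of paths.
function parseFileList(stdout) {
  return stdout.split('\0').filter((entry) => entry.length > 0);
}

// Ask git for every tracked Markdown file under `cwd`.
// `-z` separates paths with NUL bytes, so unusual file names survive intact.
// Assumption: `cwd` is inside a git repository.
function listMarkdownFiles(cwd = process.cwd()) {
  const stdout = execFileSync('git', ['ls-files', '-z', '*.md'], {
    cwd,
    encoding: 'utf8',
  });
  return parseFileList(stdout);
}
```

Keeping the parsing separate from the git call means the parsing can be tested without a repo on disk.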

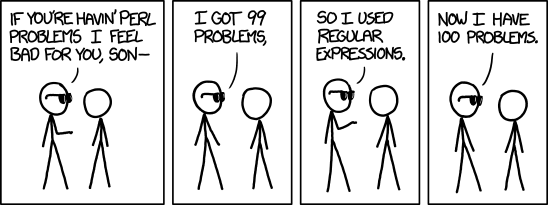

I used regular expressions (“regex”) for the core of the replacement logic. Regex is really powerful, but also very dangerous. Thankfully I was able to get it doing what I wanted it to do.
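Here is a rough sketch of what a regex-driven formatter can look like. Only the javascript to JavaScript rule comes from the issue. The rule-list shape and the names `rules` and `formatTranscript` are my assumptions for this example, not necessarily what is in the repo.

```javascript
// Each rule pairs a regex with its replacement.
// Adding a new formatting rule means adding one entry to this list.
const rules = [
  // \b keeps the match to whole words; `i` catches "Javascript" too.
  { pattern: /\bjavascript\b/gi, replacement: 'JavaScript' },
];

// Apply every rule in order and return the formatted text.
function formatTranscript(text, ruleList = rules) {
  return ruleList.reduce(
    (result, { pattern, replacement }) => result.replace(pattern, replacement),
    text
  );
}

console.log(formatTranscript('I write javascript and Javascript.'));
```

Because the formatter is a plain string-in, string-out function, it stays easy to extend and easy to test.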

It turns out it was possible to get the entire solution working with about 50 lines of code, one workflow file to define our action, and about 50 lines of tests, mostly for the regexes (regecii? regecide?).

The code

You can find the Node code in the scripts folder and the action in the .github/workflows folder of the transcripts repo.

What was hard and what I learnt 👨‍🎓

I got there, but it wasn’t all smooth sailing. Here are some sharp edges I encountered and lessons I learnt along the way:

Writing testable code

This wasn’t a sharp edge per se, but a practice that I’ve been trying to adopt as much as I can, and it proved very useful here. I call it test-driven development lite: keeping “how would you test this” top of mind. I found this especially helped break my code up into small steps that make sense in isolation. These are easier to test and reason about, and they can then be easily stitched together to build the overall solution.

Testing actions is tricky

This one was a sharp edge. As far as I know, there is no easy GitHub-provided way to test GitHub Actions (if I’m wrong please tell me, I would love to learn about this).

The way I found around this was to first push the code to my fork of the repo and manually test it there. This is much clumsier than the tight feedback loop of automated unit testing, but at least it gave me a way of validating that it works as expected before pushing it to ‘prod’.

YAGNI 🙅‍♂️

If you succeed in writing small, contained units of code, a new potential anti-pattern starts seducing you: building more than you need for your solution.

Two practical examples of this that I managed to resist in this project were:

- Not catering for use cases that you don’t have yet. E.g. I wrote a function to loop over text files, apply a transform and then write the result back to each file. I could have generalised this for the case where it doesn’t write out to the file again. But I didn’t need it for this scenario, so I didn’t extend it.

- Balancing when to stop generalising. I made a hard-coded function that returns all the .md files in the repo. I could have generalised this to accept any glob pattern. But this solution didn’t need that, so I resisted the urge.
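For a feel of how small the first of those functions can stay, here is a sketch of a "loop over files, transform, write back" helper. The name `transformFiles` and the choice to skip unchanged files are assumptions for this example, not a copy of the repo code.

```javascript
const fs = require('node:fs');

// Read each file, apply `transform` to its text, and write the result back.
// Files whose text does not change are left untouched.
// Returns the paths that were actually rewritten.
function transformFiles(filePaths, transform) {
  const changed = [];
  for (const filePath of filePaths) {
    const before = fs.readFileSync(filePath, 'utf8');
    const after = transform(before);
    if (after !== before) {
      fs.writeFileSync(filePath, after);
      changed.push(filePath);
    }
  }
  return changed;
}
```

It always writes back and has no "dry run" option, because nothing in this project needed one.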

GitHub just trusts the git CLI email address 🤷‍♂️

This was an odd one for me. I had to deal with it when figuring out how to have the formatting changes be committed as Logbot. First I tried using an auth token to have the action authenticate as Logbot, but this caused an infinite loop of action triggers. I then realised I didn’t have to do any of that, and could just use Logbot’s username and email in the git CLI. It worked because of how GitHub counts contributions. It had never occurred to me that there is no validation on the username and email you enter into git on the command line.

I also learnt it’s possible to view the email used to make commits to public repos. This is one to keep in mind when deciding which email address to use in the git CLI.
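As an illustration of how little it takes to commit under a different identity, here is one way to do it from Node. This is a sketch, not the workflow's actual code: `commitArgs` and `commitAs` are made-up names, and the name and email in the usage line are placeholders rather than Logbot's real details.

```javascript
const { execFileSync } = require('node:child_process');

// Build the git arguments for a commit attributed to the given identity.
// `-c key=value` overrides git config for this single invocation only.
function commitArgs({ name, email, message }) {
  return [
    '-c', `user.name=${name}`,
    '-c', `user.email=${email}`,
    'commit', '--all', '--message', message,
  ];
}

// Run the commit in `cwd`. Assumption: `cwd` is a git repo with changes.
function commitAs(identity, cwd = process.cwd()) {
  execFileSync('git', commitArgs(identity), { cwd, stdio: 'inherit' });
}

// Hypothetical usage (placeholder identity):
// commitAs({ name: 'some-bot', email: 'some-bot@example.com', message: 'Format transcripts' });
```

Git records whatever name and email it is given, which is exactly the behaviour that surprised me.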

Regexes are hard 🤔

Finally, as I mentioned before, regexes are hard. And clearly thinking through expressing intent is hard. For instance, an edge case was if part of a word appeared in the middle of another word. Testing came in very handy here once more. This worked well for expressing examples where the text was supposed to be replaced, and also places where it shouldn’t be replaced.

Wrapping up 🎁

Once again, this was an awesome experience for me. I made my first real code contribution to an open source repo and I learnt a lot along the way.

I also got to experience that magic feeling when your automation code runs and *just does the thing*. I still go and check to see it running on the repo occasionally, and I enjoy seeing it run every time.

Community 🌐

To top it all off, I also feel more part of the Changelog community. Jerod from Changelog was super welcoming and willing to chat me through the process and provide guidance (and I enjoyed chatting to someone who’s podcast-famous). Thanks Jerod!

Say hi 👋

If you’d like to have a chat to learn more about all this, please get in touch. If you have ideas on how we can do this better, or if I got stuff wrong, please also get in touch - I’d love to learn more too!
